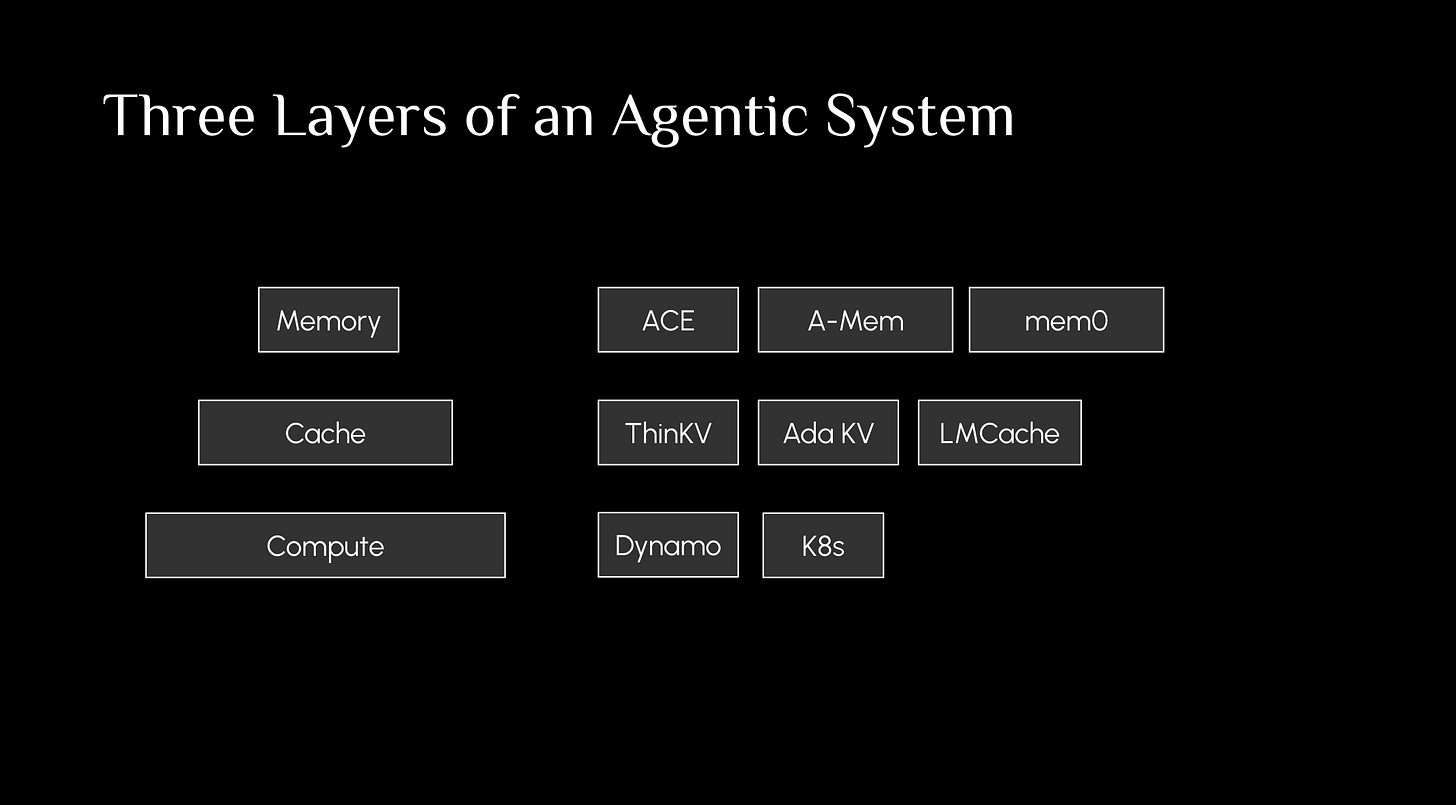

AI Agent Systems: Stateful Memory, Cache, and Orchestration

Part 1: stateful memory

Stateful memory is for improving model accuracy and capability, such as solving math problems.

Just read the following four papers

mem0

letta

A-Mem

[Most recent] ACE: https://arxiv.org/pdf/2510.04618. Below is a video on ACE

Part 2: cache

Caching doesn’t improve a modal’s capability — it is purely for saving cost and improving speed. For example, KV cache lets you avoid recomputation of past tokens, reducing latency. Further, Agentic Plan Cache saves your output (plan template) which you can directly re-use when encountering a similar prompt.

ThinKV: https://arxiv.org/abs/2510.01290

KV Cache resue: 1) Modular attention reuse for low-latency inference 2024. 2) CacheBland 2025

Compression: Shiyang Liu et al. Rethinking machine unlearning for large language models. arXiv:2402.08787, 2024.

Offload: {InfiniGen}: Efficient generative inference of large language models with dynamic {KV} cache management. 2024

Part 3: Ochestration

Nvidia Dynamo

This article comes at an opportune moment, effectively detailing essential AI agent mechansims. Thank you.